Enterprise AI is entering a phase where the hardest problem is no longer building models but running them in the real world at scale. That shift is driving a wave of infrastructure-focused partnerships. The latest comes from Impala and Highrise AI, which have announced a strategic collaboration aimed at what they describe as the industry’s most urgent constraint: execution.

The companies are positioning their partnership as a vertically integrated approach to enterprise AI infrastructure, combining Impala’s high-throughput inference stack with Highrise AI’s high-availability compute layer. The infrastructure backbone is further strengthened by access to gigawatt-scale energy supply through Hut 8’s platform, which underpins Highrise AI’s GPU infrastructure strategy.

Rather than focusing on model innovation alone, the partnership is designed to address the production layer, where many enterprise AI initiatives struggle to scale. Organizations are increasingly discovering that deploying models is relatively straightforward compared with sustaining performance, controlling costs, and ensuring reliability under production load.

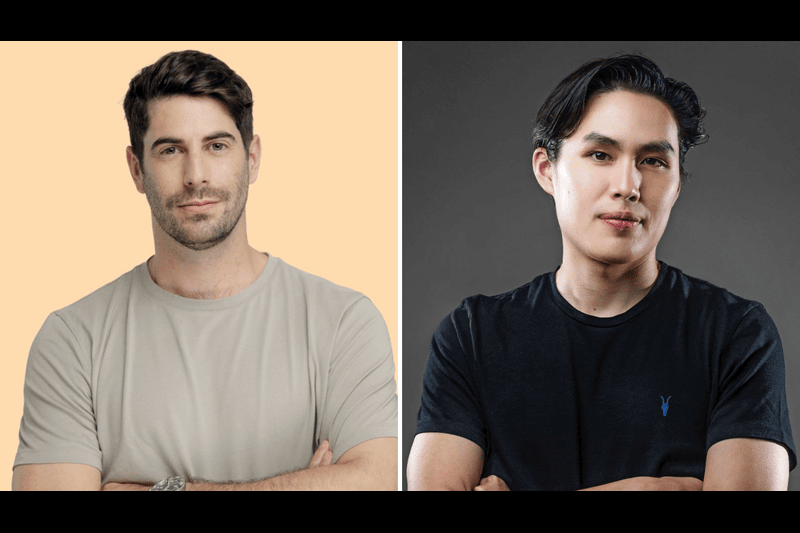

“Enterprises are no longer limited by model capability; they’re limited by execution,” said Noam Salinger, CEO of Impala. “By pairing our inference stack with Highrise AI’s infrastructure, we’re enabling organizations to run AI at the scale and efficiency that real-world applications demand.”

A Shift From Model-Centric to Infrastructure-Centric AI

The framing of this partnership reflects a broader transition across the AI ecosystem. While early enterprise adoption cycles were dominated by model evaluation and experimentation, the bottleneck has moved downstream into operations.

Impala’s inference stack is designed specifically to maximize throughput, with a focus on increasing tokens per second and improving utilization per machine. Highrise AI, for its part, provides scalable compute infrastructure designed to ease cost constraints and sustain high-volume workloads.

Together, the companies are targeting a problem set that includes throughput limitations, rising inference costs, and infrastructure fragmentation across deployments.

Economic Pressure Driving Infrastructure Consolidation

One of the central themes of the partnership is economics. As enterprises move from pilot projects to full production workloads, inference costs scale rapidly, often becoming the dominant expense in AI systems.

Impala’s architecture is designed to improve machine-level efficiency, while Highrise AI’s infrastructure layer focuses on lowering compute costs at scale. The combined result is a system intended to reduce cost per inference and enable more predictable budgeting for enterprise AI deployments.
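To make the economics concrete, here is a rough back-of-the-envelope sketch of how throughput, utilization, and compute price combine into a cost per million tokens. The function name and every number in it are hypothetical illustrations, not figures from Impala or Highrise AI, and the formula is a simplification that ignores networking, storage, and staffing costs.

```python
def cost_per_million_tokens(hourly_cost_usd: float,
                            tokens_per_second: float,
                            utilization: float) -> float:
    """Estimate the USD cost of generating one million tokens on one machine.

    hourly_cost_usd   -- assumed price of the machine per hour
    tokens_per_second -- assumed peak throughput of the inference stack
    utilization       -- fraction of each hour spent serving requests (0 to 1]
    """
    if hourly_cost_usd < 0:
        raise ValueError("hourly_cost_usd must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")

    # Tokens actually produced in one hour, after accounting for idle time.
    effective_tokens_per_hour = tokens_per_second * utilization * 3600
    return hourly_cost_usd / effective_tokens_per_hour * 1_000_000


# Hypothetical baseline: $4.00/hour, 2,000 tokens/s, 50% utilization.
baseline = cost_per_million_tokens(4.00, 2000, 0.5)

# Hypothetical improved setup: cheaper compute, higher throughput and utilization.
improved = cost_per_million_tokens(3.00, 4000, 0.8)

print(f"baseline: ${baseline:.2f} per million tokens")
print(f"improved: ${improved:.2f} per million tokens")
```

Under these assumed inputs, the three levers multiply: doubling throughput, raising utilization, and trimming the hourly price each cut the unit cost independently, which is the general shape of the argument the companies are making.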

“We’re at an inflection point where the enterprises that win will be the ones that can run AI reliably and affordably at scale,” said Vince Fong, CEO of Highrise AI. “That’s what this partnership will deliver: not just better infrastructure, but a fundamentally better economic model for AI in production.”

Security and Industry Readiness

Beyond performance and cost, the partnership also emphasizes enterprise security requirements. The joint architecture is designed for environments where data protection and regulatory compliance are critical.

Impala operates within single-tenant environments embedded in customer infrastructure, while Highrise AI provides confidential compute capabilities designed to protect sensitive workloads throughout the inference pipeline. This is particularly relevant for regulated industries such as healthcare and financial services, where data governance is non-negotiable.

Where the Partnership Is Heading

The companies are positioning the collaboration as part of a broader shift in AI infrastructure: from experimental compute environments to production-grade systems capable of handling sustained enterprise demand.

As AI adoption accelerates across industries, the infrastructure layer is becoming as strategically important as the models themselves. Impala and Highrise AI are betting that the next phase of competition will be defined not by who builds the most advanced model, but by who can execute AI reliably at scale.

“AI is entering a new phase that is defined by scale, reliability, and operational impact,” Salinger added. “Together with Highrise AI, we’re building the infrastructure foundation that makes that future possible.”