In every organization, regardless of size, industry, or maturity, workflows quietly determine outcomes. They dictate how information flows, how decisions are made, how quickly teams respond, and ultimately how value is delivered to customers. While strategy defines direction, workflows determine execution. When workflows are unclear, fragmented, or inefficient, even the strongest strategies fail to translate into consistent results.

Many businesses attempt to address performance challenges by introducing new tools, hiring additional staff, or restructuring teams. While these interventions may offer temporary relief, they often fail to resolve the underlying issue: poorly designed or poorly understood workflows. Over time, this leads to operational drag, employee frustration, and missed opportunities.

Sustainable improvement begins with clarity. Understanding how work actually moves through the organization (where it slows, where it breaks down, and where it adds value) is the foundation for meaningful optimization. This article explores a structured approach to improving performance through mapping, measuring, and refining core business workflows in a way that supports both efficiency and long-term adaptability.

Understanding Core Business Workflows

Core business workflows are the repeatable sequences of activities that enable an organization to operate and deliver value. They span functions such as sales, marketing, finance, operations, customer support, and product development. Examples include lead-to-cash, procure-to-pay, order fulfillment, employee onboarding, billing, and issue resolution.

What distinguishes core workflows from peripheral processes is their impact. They influence customer experience, revenue realization, cost control, and compliance. Despite their importance, many organizations rely on undocumented or outdated representations of these workflows, often embedded in institutional knowledge rather than formal systems.

Core business workflows typically share several defining characteristics:

- They cut across multiple teams or functions rather than remaining siloed

- They are repeatable and high-frequency in daily operations

- They directly influence revenue, cost control, risk, or customer experience

- They tend to accumulate complexity as the organization scales

These traits explain why even small inefficiencies in core workflows can create disproportionate operational impact over time.

As organizations grow, workflows tend to accumulate complexity. Additional approvals, handoffs, and exceptions are layered onto existing processes to manage risk or accommodate growth. Without deliberate redesign, this complexity erodes speed, accountability, and consistency, making it increasingly difficult to maintain performance at scale.

Distinguishing Core Workflows From Supporting Processes

Not all workflows deserve the same level of attention. One of the most common mistakes organizations make is treating every process as equally critical. In reality, core workflows differ from supporting processes in both impact and risk.

“Core workflows directly enable value creation or value capture. If they slow down or fail, customers feel it immediately, revenue is delayed, or compliance is compromised,” explains William Fletcher, CEO at Car.co.uk. Supporting processes, while necessary, typically influence internal efficiency rather than external outcomes.

Distinguishing between the two helps leaders focus optimization efforts where they matter most. By prioritizing workflows that sit closest to customers, cash flow, or regulatory exposure, organizations ensure that improvement initiatives deliver tangible business results rather than incremental internal wins.

Why Workflow Visibility Matters More Than Ever

Modern organizations operate in environments defined by volatility, distributed teams, and heightened customer expectations. In this context, workflow opacity becomes a serious operational risk. When leaders lack visibility into how work flows, decision-making becomes reactive rather than intentional.

“Poor visibility also creates inconsistency,” says Sharon Amos, Director at Air Ambulance 1. Different teams may execute the same workflow in different ways, leading to unpredictable outcomes and uneven service levels. Over time, this inconsistency damages trust—both internally among teams and externally with customers.

Visibility enables alignment. When workflows are clearly documented and shared, teams understand not only their own responsibilities but also how their work contributes to broader outcomes. This shared understanding is critical for coordination, accountability, and continuous improvement.

Mapping Workflows: Creating an Accurate Picture of Reality

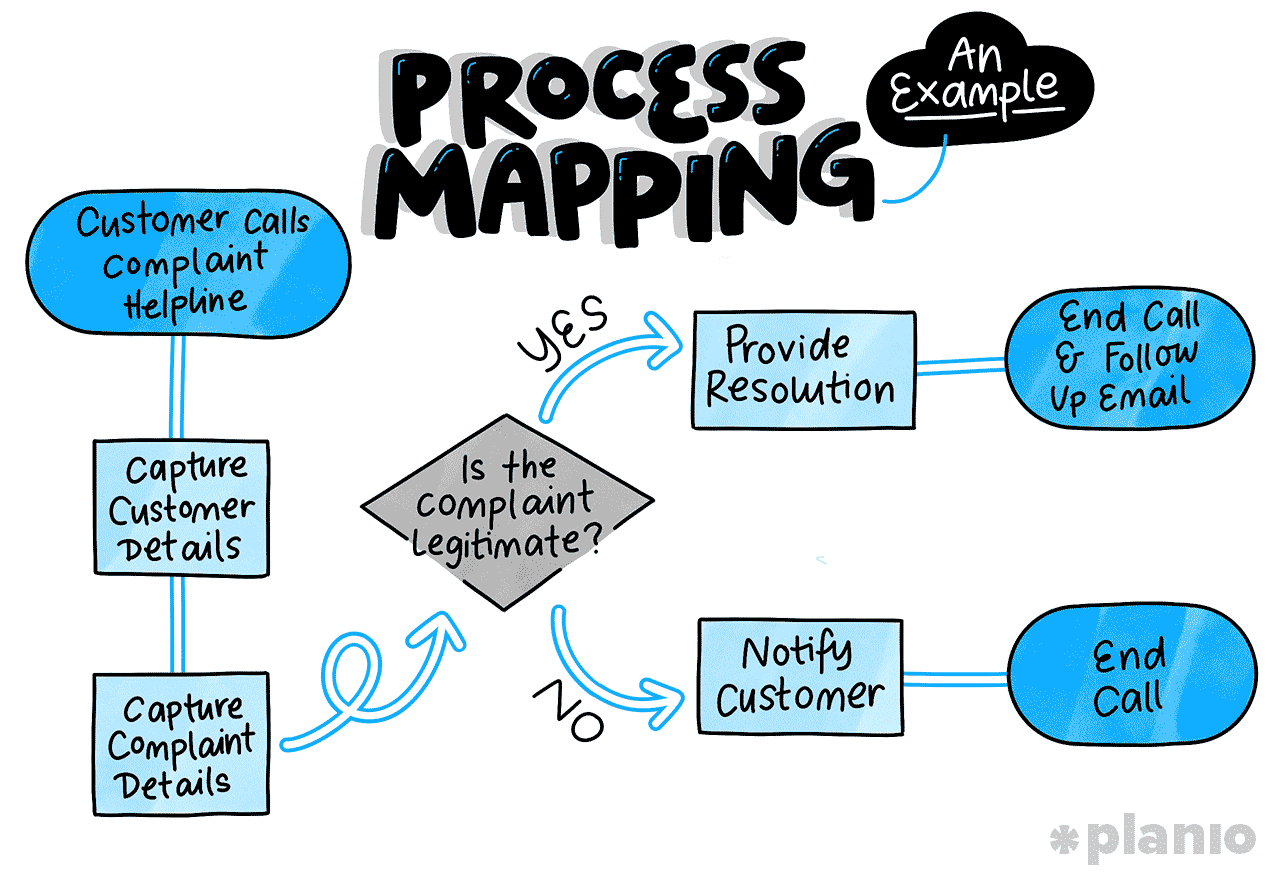

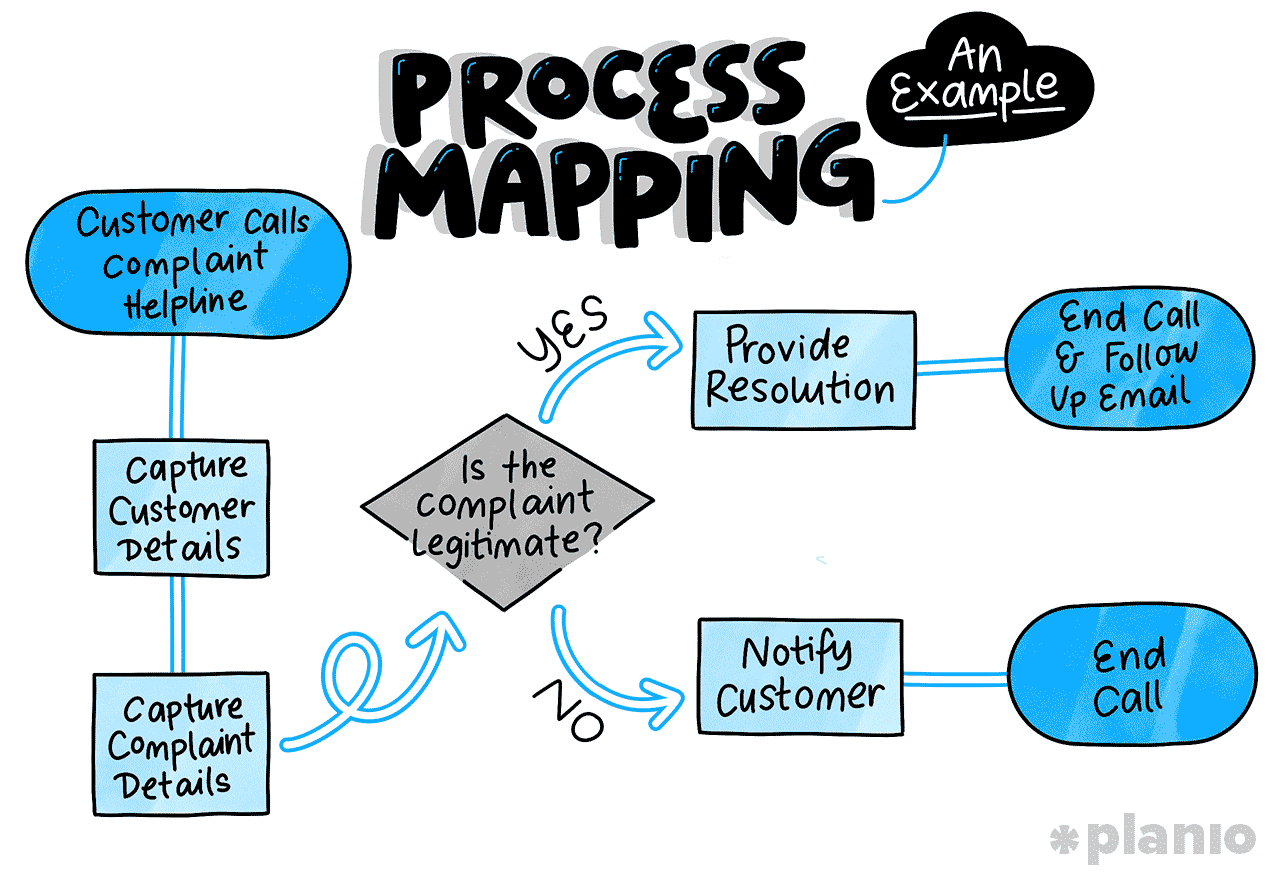

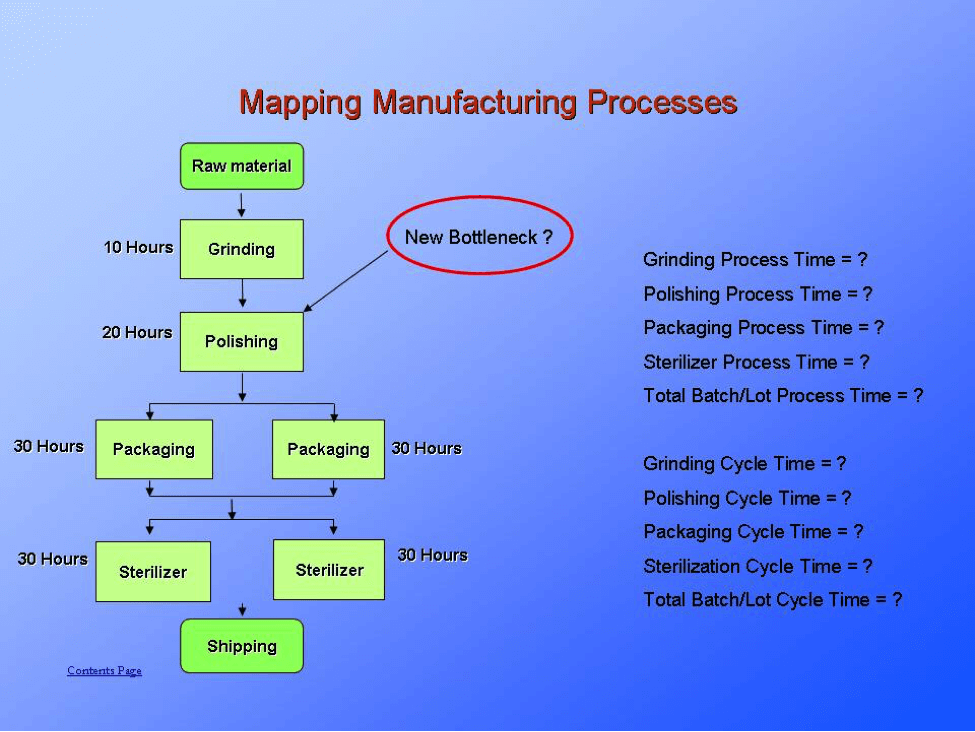

Workflow mapping is the process of documenting how work actually happens, not how it is supposed to happen. This distinction is critical. Idealized process diagrams often omit informal steps, workarounds, and decision delays that define real-world execution.

Effective mapping begins with selecting a high-impact workflow and assembling cross-functional participants who perform the work daily. Their firsthand knowledge ensures that the map reflects reality rather than policy. Mapping should capture triggers, inputs, decision points, handoffs, tools used, and outputs across the entire lifecycle of the workflow.

A well-constructed workflow map should clearly illustrate:

- The trigger that initiates the workflow

- Key activities and decision points along the path

- Handoffs between roles, teams, or systems

- Tools or platforms used at each stage

- Outputs and downstream dependencies

This level of visibility ensures the map reflects operational reality rather than theoretical design.

Choosing the Right Level of Detail in Workflow Mapping

One of the most common challenges in workflow mapping is determining the appropriate level of detail. Maps that are too high-level fail to reveal operational friction, while overly detailed maps become difficult to interpret and maintain.

“The right balance focuses on decisions, handoffs, and delays. These elements typically account for the majority of inefficiency and risk within workflows,” explains Dana Ronald, CEO of Tax Crisis Institute. Routine tasks can often be grouped, while exceptions and approvals should be explicitly documented.

Importantly, workflow maps should be treated as living artifacts. As processes evolve, maps must be updated to remain relevant. Maintaining this discipline ensures that mapping remains a practical tool rather than a one-time exercise.

Common Pitfalls in Workflow Mapping

Organizations often undermine mapping efforts through narrow or siloed approaches. Mapping within a single department rarely captures end-to-end complexity, particularly for workflows that span multiple teams.

Another pitfall is treating mapping as a compliance exercise rather than a diagnostic one. When participants feel pressure to present workflows in a favorable light, critical issues remain hidden. Psychological safety and leadership support are essential for honest documentation.

Finally, mapping without intent leads to stagnation. Workflow maps should exist to inform measurement and improvement. Without clear next steps, even the most accurate maps fail to deliver value.

The Role of Cross-Functional Collaboration in Workflow Design

“Because core workflows span multiple teams, no single function has complete ownership of how they operate. Sales may initiate a workflow, operations may execute it, finance may validate it, and customer support may deal with the consequences when it breaks down,” explains Beni Avni, founder of New York Gates.

Effective workflow design, therefore, requires deliberate cross-functional collaboration. Mapping and redesign sessions should include representatives from every stage of the workflow, ensuring that decisions reflect end-to-end realities rather than local optimization.

This collaborative approach also builds shared accountability. When teams understand how their actions affect downstream outcomes, friction decreases and cooperation improves. Over time, this shared ownership becomes a powerful driver of operational maturity.

Measuring Workflow Performance: From Activity to Outcomes

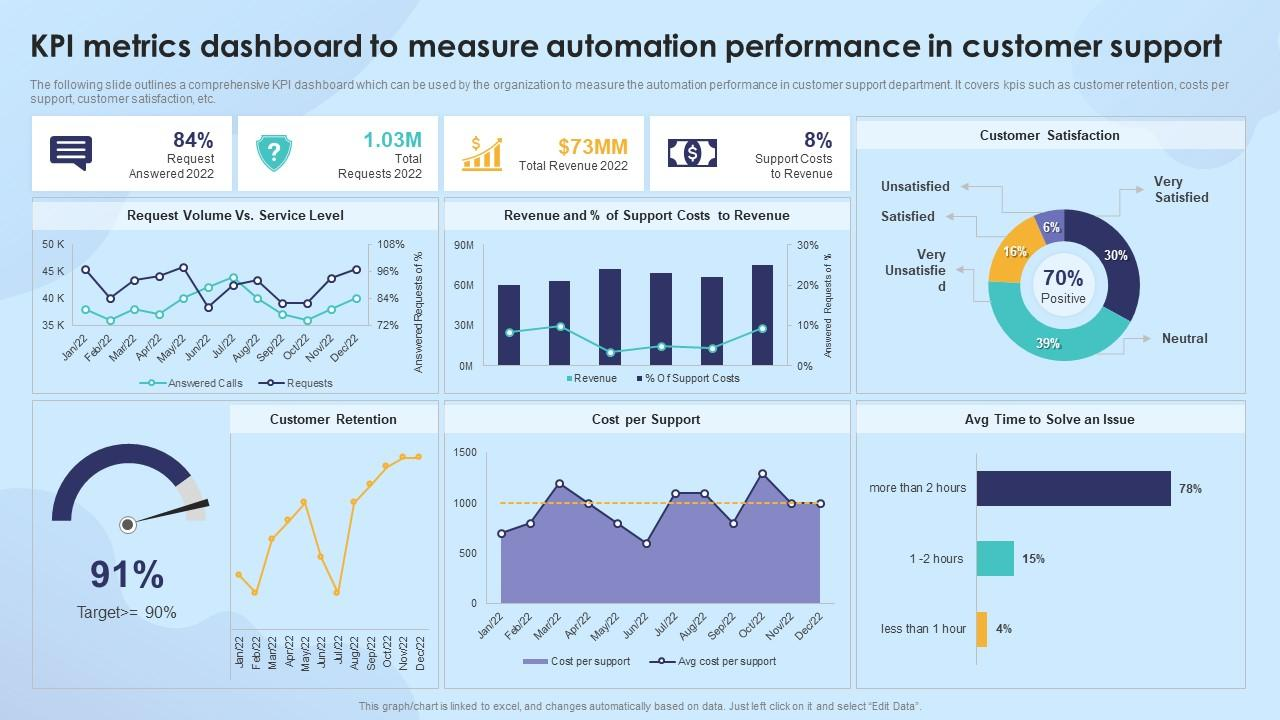

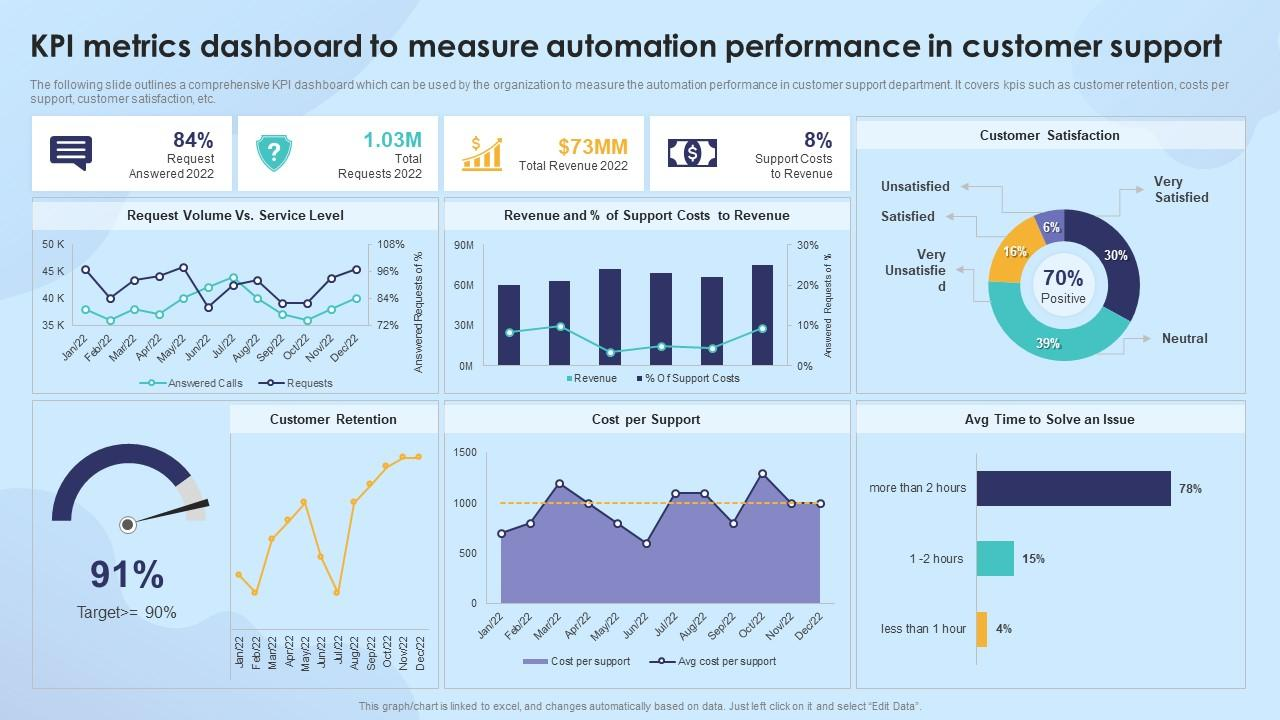

Mapping provides visibility; measurement provides insight. Once workflows are clearly defined, organizations can evaluate how effectively they perform. Measurement shifts conversations from anecdotal frustration to objective analysis.

Common indicators used to assess workflow performance include:

- End-to-end cycle time

- Error or defect rates

- Rework frequency

- Cost per transaction or case

- Customer or internal stakeholder satisfaction
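As a minimal sketch of how the quantitative indicators above might be computed, the Python below assumes each completed case is recorded with a start time, an end time, an error flag, a count of rework loops, and a handling cost. The `Case` record, its field names, and the sample data are hypothetical; satisfaction scores would normally come from surveys and are left out.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median


@dataclass
class Case:
    """One completed pass through a workflow (hypothetical record layout)."""
    started: datetime
    finished: datetime
    had_error: bool     # a defect was found in the output
    rework_loops: int   # times the case was sent back to an earlier step
    cost: float         # handling cost for this case, in one currency


def workflow_metrics(cases: list[Case]) -> dict[str, float]:
    """Summarize cycle time, error rate, rework rate, and cost per case."""
    if not cases:
        raise ValueError("at least one completed case is required")
    cycle_hours = [(c.finished - c.started).total_seconds() / 3600 for c in cases]
    return {
        "median_cycle_hours": median(cycle_hours),
        "mean_cycle_hours": mean(cycle_hours),
        "error_rate": sum(c.had_error for c in cases) / len(cases),
        "rework_rate": sum(c.rework_loops > 0 for c in cases) / len(cases),
        "cost_per_case": mean(c.cost for c in cases),
    }


# Made-up sample cases, for illustration only.
cases = [
    Case(datetime(2024, 1, 8, 9), datetime(2024, 1, 8, 17), False, 0, 40.0),
    Case(datetime(2024, 1, 8, 10), datetime(2024, 1, 9, 10), True, 1, 95.0),
    Case(datetime(2024, 1, 9, 9), datetime(2024, 1, 9, 21), False, 0, 45.0),
    Case(datetime(2024, 1, 9, 13), datetime(2024, 1, 11, 13), False, 2, 120.0),
]
metrics = workflow_metrics(cases)
print(metrics)
```

For these four sample cases the median cycle time is 18 hours, a quarter of the cases contain an error, and half needed rework. Reporting the median alongside the mean is a deliberate choice here: a single very slow case pulls the mean up, while the median stays closer to the typical experience.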

Establishing baseline performance is essential. Baselines provide context for improvement efforts and prevent misinterpretation of results. Without them, it becomes difficult to determine whether changes represent real improvement or simply shift work elsewhere.
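To illustrate how a baseline exposes shifted work, the sketch below compares average hours per stage before and after a change. The stage names and figures are invented for illustration, and `compare_to_baseline` is a hypothetical helper rather than part of any particular tool.

```python
def compare_to_baseline(baseline: dict[str, float],
                        current: dict[str, float]) -> dict[str, float]:
    """Return the change in hours per stage, plus the end-to-end change.

    Negative values mean the stage is faster than it was at baseline.
    """
    if baseline.keys() != current.keys():
        raise ValueError("baseline and current must cover the same stages")
    deltas = {stage: current[stage] - baseline[stage] for stage in baseline}
    deltas["end_to_end"] = sum(current.values()) - sum(baseline.values())
    return deltas


# Illustrative average hours per stage (made-up numbers).
baseline = {"intake": 6.0, "review": 10.0, "fulfillment": 8.0}
current = {"intake": 2.0, "review": 13.0, "fulfillment": 8.0}

deltas = compare_to_baseline(baseline, current)
# Stages that became slower are candidates for work that was merely moved.
shifted = [stage for stage, delta in deltas.items()
           if stage != "end_to_end" and delta > 0]
print(deltas, shifted)
```

In this example intake is four hours faster but review is three hours slower, so the end-to-end gain is only one hour. Looking at the intake figure alone would overstate the improvement.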

Linking Workflow Metrics to Business Performance

“Workflow metrics create real value only when they are directly connected to broader business outcomes. On their own, indicators such as cycle time, error rates, or throughput provide limited insight,” explains Tom Bukevicius, Principal at Scube Marketing. Their importance becomes clear when leaders understand how changes in these metrics affect revenue, cost structure, risk exposure, and customer experience.

For example, faster cycle times can improve cash flow by shortening the time between completing work and collecting payment, while reduced error rates may lower compliance risk, rework, and operational cost. When these connections are explicit, workflow performance moves from an operational concern to a strategic lever.

This linkage also enables better prioritization. Not all workflows deserve equal attention or investment. Metrics help leaders identify which workflows have the greatest impact on business performance and where improvement efforts will deliver the highest return.

In practice, effective organizations use workflow metrics to:

- Connect operational performance to financial outcomes such as revenue, margin, and cash flow

- Identify workflows that directly influence customer satisfaction and retention

- Assess risk exposure related to compliance, quality, or service reliability

- Compare improvement opportunities based on strategic impact rather than local efficiency

By aligning workflow metrics with organizational goals, measurement becomes a decision-making tool rather than an operational afterthought. Leaders gain a clearer basis for investment, teams understand why improvements matter, and optimization efforts remain focused on outcomes that drive long-term performance.

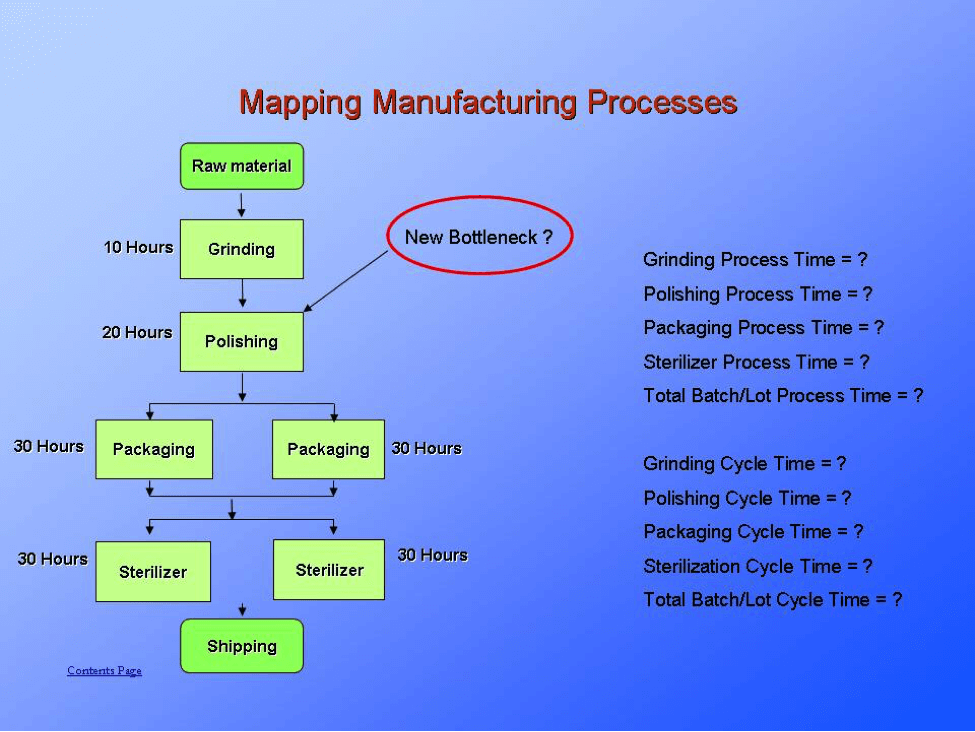

Identifying Bottlenecks and Root Causes

Performance data often reveals patterns: consistent delays at certain steps, recurring errors after specific handoffs, or uneven workloads across roles. These patterns point to bottlenecks—constraints that limit overall workflow performance.
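One simple way to surface such a pattern is to average the waiting time before each step across many cases. The sketch below assumes an event log of `(case_id, step, wait_hours)` tuples; the log layout and the timings are hypothetical, and it measures queue time on the assumption that delay shows up as waiting rather than hands-on work.

```python
from collections import defaultdict
from statistics import mean

# (case_id, step, hours the case waited before the step began).
# Hypothetical timings; a real log would be exported from a tracking system.
events = [
    ("A", "intake", 0.5), ("A", "approval", 20.0), ("A", "fulfillment", 2.0),
    ("B", "intake", 1.0), ("B", "approval", 28.0), ("B", "fulfillment", 3.0),
    ("C", "intake", 0.0), ("C", "approval", 12.0), ("C", "fulfillment", 4.0),
]


def average_wait_by_step(log):
    """Group waiting times by step and return the mean wait for each step."""
    waits = defaultdict(list)
    for _case_id, step, wait_hours in log:
        waits[step].append(wait_hours)
    return {step: mean(values) for step, values in waits.items()}


avg_wait = average_wait_by_step(events)
# The step with the longest average wait is the first place to investigate.
bottleneck = max(avg_wait, key=avg_wait.get)
print(avg_wait, bottleneck)
```

With this sample data the approval step averages 20 hours of waiting, far more than intake or fulfillment, so it is the candidate bottleneck. The figure says where work queues up, not why.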

However, addressing bottlenecks requires understanding root causes. Delays may stem from unclear decision authority, mismatched capacity, or outdated systems rather than individual behavior. Root cause analysis techniques, such as the 5 Whys or a fishbone (Ishikawa) diagram, help uncover these structural issues.

“Focusing on root causes ensures that improvements address underlying constraints rather than temporary symptoms, leading to more durable performance gains,” says Julia Rueschemeyer, Attorney at Amherst Divorce.

Improving Workflows: Designing for Flow and Simplicity

Effective workflow improvement prioritizes flow—the smooth progression of work from start to finish with minimal interruption. This typically involves eliminating non-value-adding activities, clarifying ownership, and reducing unnecessary variation.

In practice, effective workflow improvements often involve:

- Removing redundant approvals and reviews

- Clarifying ownership at each stage of the process

- Reducing unnecessary handoffs

- Standardizing core steps while managing exceptions deliberately

These changes improve speed and reliability without introducing excessive control or rigidity.

Balancing Control and Flexibility in Workflow Design

One of the most difficult challenges in workflow optimization is finding the right balance between control and flexibility. Too much control leads to rigidity, slow decision-making, and disengaged teams. Too much flexibility results in inconsistency, risk exposure, and unpredictable outcomes.

Well-designed workflows establish clear standards for common scenarios while allowing defined exceptions for edge cases. This approach preserves speed without sacrificing governance. Decision rights should be explicit, and escalation paths should be simple and visible.
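A rough sketch of what explicit decision rights can look like when written down as rules follows. The purchase-request scenario, the thresholds, and the role names are all assumptions chosen for illustration; the point is only that the standard path, the defined exception, and the escalation path are each visible in one place.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """A purchase request (hypothetical example of a routed work item)."""
    amount: float
    is_new_vendor: bool


STANDARD_LIMIT = 5_000.0    # assumed ceiling for the standard path
MANAGER_LIMIT = 50_000.0    # assumed ceiling for a manager's decision


def route(request: Request) -> str:
    """Return who decides, checking the defined exception before the standard path."""
    if request.is_new_vendor:
        return "procurement_review"   # defined exception: new vendors get a review
    if request.amount <= STANDARD_LIMIT:
        return "auto_approve"         # standard path for common, low-value requests
    if request.amount <= MANAGER_LIMIT:
        return "manager"              # first escalation level
    return "finance_director"         # final escalation level


print(route(Request(1_200.0, False)))
print(route(Request(1_200.0, True)))
print(route(Request(80_000.0, False)))
```

Keeping the rules this short is itself a design choice: if the function needs many more branches, that is usually a sign that exceptions are accumulating and the workflow is due for review.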

By designing workflows that are structured but adaptable, organizations can respond to change without constantly redesigning their operating model.

Managing Change and Adoption During Workflow Improvements

Even the most thoughtfully designed workflows fail if they are not adopted in practice. Change management is therefore not a supporting activity, but a core component of any workflow improvement effort. When teams do not understand the purpose behind changes, new workflows are often perceived as additional bureaucracy rather than performance enablers.

“Successful adoption begins with context. Teams must clearly understand why a workflow is changing, what problems the change is intended to solve, and how it improves outcomes for both the organization and the individuals doing the work,” says Tal Holtzer, CEO of VPSServer. Without this shared understanding, resistance tends to surface in subtle ways—workarounds, partial compliance, or reversion to old habits.

Clear communication, practical training, and phased implementation significantly reduce disruption. Rather than introducing sweeping changes all at once, effective organizations sequence improvements, allowing teams to build confidence and capability over time. This approach also makes it easier to identify unintended consequences early and adjust before issues scale.

In practice, strong adoption efforts typically include:

- Clear articulation of the business rationale behind workflow changes

- Role-specific training focused on real work scenarios

- Phased rollouts that limit operational risk and disruption

- Feedback channels that allow teams to raise issues and suggest refinements

- Visible leadership support that reinforces the importance of the new workflow

When adoption is treated as part of the workflow design process—not an afterthought—teams are more likely to engage constructively with change. Over time, workflows shift from being perceived as imposed structures to becoming shared enablers of performance, alignment, and accountability across the organization.

The Role of Technology in Workflow Improvement

Technology can significantly improve workflow performance, but only when applied with intent. Introducing automation before understanding and simplifying a process often accelerates inefficiencies rather than resolving them. Technology should reinforce well-designed workflows, not compensate for unclear ones.

Automation and Operational Efficiency

Automation delivers the most value when applied to repetitive, rule-based tasks that require consistency rather than judgment. Activities such as data synchronization, notifications, and basic validations can be automated to reduce manual effort and error rates. This allows teams to focus on higher-value work such as analysis, decision-making, and customer engagement.
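As an illustration of a basic validation that suits automation, the sketch below checks an incoming order for missing fields and invalid quantities before anyone has to look at it. The order layout and field names (`customer_id`, `items`, `delivery_address`, `sku`, `quantity`) are hypothetical.

```python
def validate_order(order: dict) -> list[str]:
    """Return a list of problems found; an empty list means the order can proceed."""
    problems = []
    # Rule 1: required fields must be present and non-empty.
    for field in ("customer_id", "items", "delivery_address"):
        if not order.get(field):
            problems.append(f"missing {field}")
    # Rule 2: every line item needs a positive quantity.
    for item in order.get("items") or []:
        if item.get("quantity", 0) <= 0:
            problems.append(f"invalid quantity for {item.get('sku', 'unknown item')}")
    return problems


good = {
    "customer_id": "C-1",
    "items": [{"sku": "SKU-1", "quantity": 2}],
    "delivery_address": "1 Main St",
}
bad = {
    "customer_id": "C-2",
    "items": [{"sku": "SKU-2", "quantity": 0}],
    "delivery_address": "",
}
print(validate_order(good))
print(validate_order(bad))
```

The function only reports problems; deciding what to do with a failed order remains a human or policy decision, which keeps judgment out of the automated step.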

Visibility, Measurement, and Decision Support

Beyond automation, technology enables real-time visibility into workflow performance. Dashboards and alerts help leaders monitor cycle times, bottlenecks, and exceptions as they occur. However, visibility must be purposeful. Tracking too many metrics creates noise and slows decision-making. Effective systems surface only the information needed to support timely, accountable action.

When used deliberately, technology strengthens execution and scalability. When applied without clarity, it adds complexity and obscures the problems it is meant to solve.

Sustaining Improvement Through Governance and Ownership

Workflow optimization is not a one-time initiative or a transformation project with a fixed end date. As organizations grow, enter new markets, adopt new technologies, or respond to regulatory change, workflows must continuously evolve. Without deliberate governance, even well-designed processes gradually degrade as exceptions accumulate and informal workarounds take hold.

Sustaining improvement requires clear ownership. Each core workflow should have a designated owner with end-to-end accountability for performance, documentation, and ongoing refinement. This role ensures that workflows are managed as systems rather than collections of disconnected tasks, and that changes are evaluated based on their impact across teams.

Regular, structured reviews play a critical role in preventing drift. These reviews assess performance trends, emerging bottlenecks, and alignment with strategic priorities. When conducted consistently, they help organizations identify issues early and make incremental adjustments before problems become systemic.

Equally important is cultural reinforcement. When workflow thinking is embedded into how teams plan, execute, and evaluate work, optimization becomes a shared responsibility rather than a centralized effort. Over time, this mindset shifts workflow improvement from a periodic initiative into a durable organizational capability—one that supports resilience, scalability, and long-term performance.

Using Workflow Insights to Drive Continuous Improvement

Workflow optimization should not end once initial improvements are implemented. The most effective organizations treat workflow data as a continuous source of insight rather than a one-time diagnostic tool.

Performance trends, exception rates, and cycle-time fluctuations often signal emerging issues before they become visible problems. Regularly reviewing these signals enables teams to make small, incremental adjustments that prevent larger disruptions.
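One way to turn such signals into a routine check is to compare a recent window of a metric against the window before it. The sketch below is a simple heuristic under stated assumptions: weekly average cycle times, a four-week window, and a 15 percent tolerance, all of which are illustrative choices rather than recommended values.

```python
from statistics import mean


def is_drifting(weekly_values: list[float], window: int = 4,
                tolerance: float = 0.15) -> bool:
    """Flag when the latest `window` weeks average more than `tolerance`
    above the `window` weeks immediately before them."""
    if len(weekly_values) < 2 * window:
        return False  # not enough history to compare two full windows
    recent = mean(weekly_values[-window:])
    previous = mean(weekly_values[-2 * window:-window])
    return recent > previous * (1 + tolerance)


# Made-up weekly average cycle times, in hours.
rising = [20, 21, 19, 20, 22, 24, 25, 27]
stable = [20, 21, 19, 20, 20, 21, 20, 19]

print(is_drifting(rising))
print(is_drifting(stable))
```

In the rising series the last four weeks average 24.5 hours against 20 hours before, which exceeds the 15 percent tolerance and is flagged; the stable series is not. A flag of this kind is a prompt to look closer, not a diagnosis.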

Over time, this feedback-driven approach shifts workflow improvement from reactive problem-solving to proactive performance management—embedding continuous improvement into daily operations rather than periodic transformation projects.

Aligning Workflows With Strategic Objectives

Optimized workflows must directly support an organization’s strategic intent. A growth-focused strategy may require workflows that prioritize speed, scalability, and responsiveness, while a compliance-driven strategy may emphasize control, traceability, and risk management. Without deliberate alignment, even well-optimized workflows can pull the organization in the wrong direction.

Alignment begins by translating high-level strategic goals into clear operational requirements. Leaders must ask how strategy should influence day-to-day execution—what behaviors workflows should encourage, what outcomes they must consistently deliver, and where trade-offs are acceptable. When these requirements are explicit, workflow improvements reinforce long-term objectives rather than unintentionally undermining them.

End-to-end thinking is essential to maintaining this alignment. Optimizing individual steps or departments in isolation often creates downstream inefficiencies, shifting cost or complexity rather than eliminating it. Viewing workflows as integrated systems ensures that local improvements contribute to overall performance, customer experience, and strategic outcomes.

When workflows are continuously evaluated through a strategic lens, they become more than operational mechanisms. They serve as practical expressions of strategy—guiding execution, enabling consistency, and helping the organization adapt without losing focus.

Conclusion

Mapping, measuring, and improving core business workflows is not about incremental efficiency alone; it is about building the operational foundation for sustainable performance. Clear workflows reduce friction, improve decision-making, and enable consistent execution in complex environments.

Organizations that treat workflows as strategic assets gain visibility, alignment, and resilience. Measurement transforms intuition into insight, while disciplined improvement ensures that daily operations support long-term goals.

In an era defined by constant change, the ability to understand and refine how work flows is a decisive advantage. Businesses that invest in this capability position themselves to adapt, scale, and compete with confidence.